Many countries evaluate publicly funded research with formal periodic exercises (see Wang et al. 2014 for a recent survey). Among the pioneers in this field, the UK has conducted several such exercises since 1986, as explained in detail on the RAE2008 website. The aim of these exercises is threefold: (i) to ensure accountability for taxpayer-funded investment in research, (ii) to produce public evidence of its benefits, and (iii) to guide the selective allocation of the annual ‘block’ budget for research to institutions.

These exercises are very costly. A substantial part of this cost is the time of the over 1,000 assessors, divided into 36 panels, whose task is to assess research in each specific discipline. The panels assess the contribution of the academics submitted by each institution in three areas: research output, environment, and impact. Output is assessed through the evaluation of scholarly work (such as books or journal articles), published in the period since the previous assessment. Each academic submitted is required to list four different items, and the panels attribute to each item they receive a measure of quality on a five-point scale (ranging from 4* “quality that is world-leading in terms of originality, significance and rigour”, to 0* “quality that falls below the standard of nationally recognised work”). Evaluation is by peer review, each item being subjectively assessed by some members of the panel. With close to 200,000 outputs to assess, it is easy to see how costs may escalate rapidly.

In an attempt to reduce the cost, the British funding agency ran a pilot study on replacing peer review with an evaluation based on a bibliometric algorithm, but concluded, partly following the sentiments of the academic community, that this method is “not sufficiently robust […] to replace expert review in the [evaluation]” (HEFCE 2009). This is despite the fact that many other countries conduct their research evaluation using a bibliometric algorithm, a mechanical method for attributing a score to a publication based on measurable objective parameters such as the number of citations and the quality of the journal where it is published (Wang et al. 2014).

In a paper (Checchi et al. 2019) to be presented at the European Economic Association conference in Manchester on 28 August, we investigate whether a sophisticated bibliometric algorithm could have replaced the assessment obtained via peer review in the last evaluation of the research conducted by British universities, the REF2014.

In recent contributions in the same vein, Mryglod et al. (2015) and Harzing (2014) use a departmental h-index (a measure developed by Hirsch 2010) to measure the academic impact of a given set of publications, for example all those by a given person, in a given journal, or from a given department. These papers, while limited to a few subjects, find a high correlation between the institutional ranking produced by this method and that produced by the REF. As well as covering only a small range of topics, they did not evaluate the same submissions that were made to the REF, for two reasons. First, institutions could choose which academics to submit to the REF, and to which panels. Thus, it is possible that a researcher listed on a departmental website was not submitted to the REF, or was submitted to a different panel from the rest of her colleagues in the department. Second, even when the list of academics submitted is the same as that listed on the departmental website, the list of papers is unlikely to be. Four papers had to be submitted for each person, so a prolific author could have only four papers included in the departmental submission, while a less productive one would have 0* outputs recorded in lieu of the missing papers.
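To make the h-index concrete, the short sketch below computes it for a set of publications using the standard definition: the largest number h such that h items have each received at least h citations. The citation counts are made up for illustration and are not taken from any of the papers cited.

```python
def h_index(citations):
    """Return the largest h such that h items have at least h citations each."""
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank
        else:
            break
    return h


# Hypothetical citation counts for all the papers of one department.
department_citations = [25, 8, 5, 3, 3, 1, 0]
department_h = h_index(department_citations)
print(department_h)
```

Because the index is computed over whatever set of papers is supplied, the result depends on which academics and which papers are included, which is exactly the source of the mismatch with the REF submissions described above.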

In our exercise, we downloaded all the outputs submitted to the REF directly from the REF website (www.ref.ac.uk/2014), where they are available as Excel files. We then assessed these outputs, whenever possible, using the bibliometric method adopted by the Italian research evaluation agency (ANVUR) in the VQR, its evaluation of the research carried out by academics working in some research areas in Italian universities. With this method, each paper is assigned a score based on two measures: (i) the impact factor of the journal where it is published, relative to the impact factor of journals in the same research area, and (ii) the number of citations the paper has received, again relative to the citations received by papers written in the same research area. The relative weight of these measures is adjusted by the panels according to the year of publication. This takes into account the facts that older papers have had more time to accumulate citations, and that in some research areas citations are more persistent while in others they cease relatively soon after publication. Following the methodology of the Italian agency, only articles published in academic outlets included in the Scopus catalogue were assessed. Thus, of the 190,962 outputs submitted, we can assess almost 140,000 journal articles. The remaining outputs, around one third of the total, are articles not included in Scopus, books or chapters in books, and a tiny fraction of more unusual outputs, such as compositions, patents, exhibitions, or scholarly editions.
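As a rough illustration of how two relative measures can be combined with a year-dependent weight, consider the sketch below. It is not the VQR algorithm: the weighted average, the function names, and the weights in `HYPOTHETICAL_CITATION_WEIGHT` are all assumptions made for this example, chosen only to reflect the idea that citations are more informative for older papers.

```python
def combined_score(journal_pct, citation_pct, citation_weight):
    """Weighted average of two percentile ranks, each between 0 and 100."""
    if not 0.0 <= citation_weight <= 1.0:
        raise ValueError("citation_weight must lie between 0 and 1")
    return citation_weight * citation_pct + (1.0 - citation_weight) * journal_pct


# Hypothetical weights by year of publication (an assumption, not the
# values used by the Italian panels): older papers lean more on citations.
HYPOTHETICAL_CITATION_WEIGHT = {2008: 0.8, 2010: 0.6, 2012: 0.4, 2013: 0.2}


def score_paper(year, journal_pct, citation_pct):
    """Score one paper using the hypothetical weight for its year."""
    weight = HYPOTHETICAL_CITATION_WEIGHT[year]
    return combined_score(journal_pct, citation_pct, weight)


# The same pair of percentiles yields different scores in different years.
old_paper = score_paper(2008, journal_pct=50.0, citation_pct=100.0)
new_paper = score_paper(2013, journal_pct=50.0, citation_pct=100.0)
print(old_paper, new_paper)
```

In this toy setting a highly cited paper in a mid-ranked journal scores better if it is old than if it is recent, because the recent paper's score rests mainly on the journal measure.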

Effectively, we assessed the outputs as if they had been submitted by an Italian institution to the Italian evaluation exercise. Doing so required us to assign each journal to an area of reference, because the number of citations and the journal impact factors are assessed relative to the worldwide distribution of citations and impact factors in that area. For example, a 2012 mathematics paper with 40 citations could be in the 97th percentile of the distribution of maths papers for that year, whereas a 2010 psychology paper also with 40 citations could be in the 45th percentile of the world distribution of citations in psychology for that year. While the bibliometric algorithm was used only by some of the Italian panels, we were able to allocate all the Scopus journals to at least one area, based on the publication patterns of the authors of other papers in the same area.
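The role of the area of reference can be sketched as a percentile-rank calculation against an area-and-year reference distribution. The two distributions below are tiny, invented samples, so the resulting percentiles are illustrative only and do not reproduce the figures in the example above.

```python
from bisect import bisect_right


def percentile_rank(value, reference):
    """Percentage of reference values that are less than or equal to value."""
    if not reference:
        raise ValueError("reference distribution must not be empty")
    ordered = sorted(reference)
    return 100.0 * bisect_right(ordered, value) / len(ordered)


# Made-up citation counts standing in for the worldwide distributions.
maths_2012 = [0, 1, 1, 2, 3, 4, 6, 9, 15, 60]
psychology_2010 = [5, 12, 20, 33, 38, 45, 52, 70, 95, 140]

# The same raw count of 40 citations ranks very differently by area.
maths_rank = percentile_rank(40, maths_2012)
psychology_rank = percentile_rank(40, psychology_2010)
print(maths_rank, psychology_rank)
```

With these invented samples, 40 citations places the maths paper well above the psychology paper, which is why a raw citation count cannot be compared across areas without a reference distribution.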

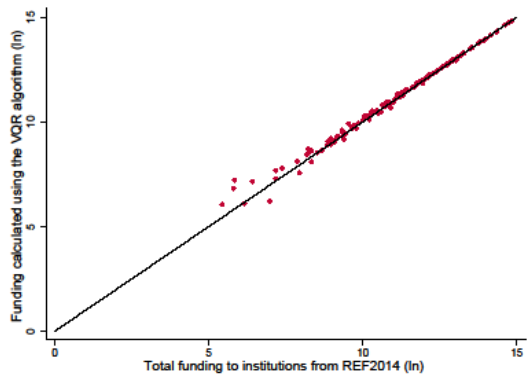

Figure 1

Note: Annual funding of UK institutions as allocated according to the REF results (horizontal axis) and as it would be determined if outputs had been assessed using the VQR bibliometric algorithm (vertical axis). Both axes use logarithmic scales. When a dot is on the diagonal line, the institution it represents would receive the same funding under both mechanisms.

The results we obtain are startling. In particular, the allocation of government funding to institutions that the algorithm would have produced is essentially identical to that determined by the rules used by the REF2014. The correlation between the two methods is 0.9997. This is illustrated in Figure 1, where the axes measure the (natural log of the) total annual funding allocated to universities: in practice, with the REF, and in theory, had the VQR algorithm been used instead. When a point is on the diagonal, the funding would be exactly the same with the two methods. As one can see from the figure, using the VQR algorithm would alter funding noticeably only in some of the smallest institutions, mostly specialist institutions such as agricultural colleges and art or music schools, or smaller institutions specialising in teaching, and hardly at all in every other case.
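For readers who want to see what such a comparison involves, the sketch below computes a Pearson correlation between two funding allocations after taking natural logs, mirroring the axes of Figure 1. The funding figures are invented, and whether the reported correlation was computed on logs or on levels is not stated here, so this is only an illustration of the calculation.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length, at least 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical annual funding (in millions of pounds) for four institutions.
ref_funding = [120.0, 45.0, 8.0, 0.6]
vqr_funding = [118.0, 47.0, 7.5, 0.9]

log_corr = pearson(
    [math.log(v) for v in ref_funding],
    [math.log(v) for v in vqr_funding],
)
print(round(log_corr, 4))
```

In this made-up sample the largest proportional gap is at the smallest institution, which echoes the pattern visible in the figure.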

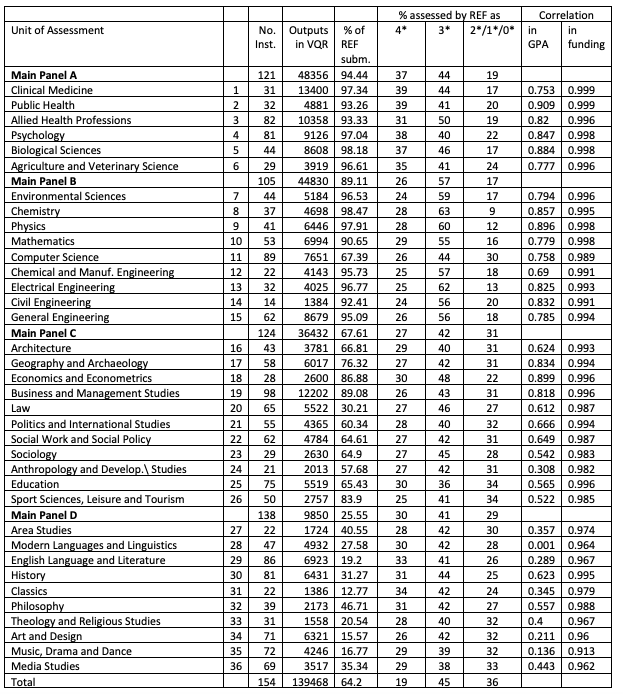

Table 1

Note: The columns in the table report the name and number of the units of assessment, grouped in their respective main panels, the number of institutions submitted to that panel, the percentage of the outputs submitted which could be assessed with the VQR bibliometric algorithm, and the percentage of the outputs submitted which were assessed by the REF panel as 4, 3, and 2, 1, or 0 stars. The last two columns show the correlation between the results obtained by the institutions in each panel in the REF and the results which would have been obtained had their outputs been assessed with the VQR bibliometric algorithm. The two columns differ in that one calculates the average number of stars of the outputs submitted, and the other the total funding that the outputs submitted determine.

Our paper also reports data disaggregated by departments. Here the correspondence between the measures obtained in the REF and those that we calculated using the Italian bibliometric algorithm is less precise. This is not surprising: the funding to institutions is an aggregation of the funding accruing as a consequence of the performance of all their departments, and discrepancies between the two assessment methods (in different directions) tend to cancel out. Although not as striking as in the aggregate case, the correlation between the two methods nevertheless remains extraordinarily high, ranging from 0.999 for medicine to 0.913 for music. That is, even when one is interested in the performance of different institutions in each specific discipline, there is very little difference between the outcome of the British REF peer review evaluation and that of the bibliometric algorithm used by the Italian VQR. This is particularly striking given that, as the fifth column of Table 1 shows, in some research areas, mostly in the arts and humanities, we were able to use the VQR bibliometric algorithm only for a small percentage of the outputs submitted to the REF by the British institutions. We attribute the high correlation between the REF and the VQR evaluations, even for areas where we could assess only a few outputs, to the high correlation between the quality of the bibliometrically measurable output and that of the output which could not be assessed in this way. In other words, members of a department who publish in high quality journals also publish high quality books, which are then positively assessed in the peer review.
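The cancelling-out argument can be illustrated with a toy calculation. The department names and funding figures below are invented, and they are deliberately constructed so that the discrepancies point in different directions; real data would cancel only partially.

```python
def relative_gap(peer_review, bibliometric):
    """Absolute difference between the two methods, as a share of peer review."""
    return abs(peer_review - bibliometric) / peer_review


# Hypothetical funding (in millions of pounds) for one institution,
# by department, under the two assessment methods.
peer = {"physics": 10.0, "history": 6.0, "music": 4.0}
biblio = {"physics": 10.5, "history": 5.4, "music": 4.1}

# Gap for each department taken separately.
dept_gaps = {dept: relative_gap(peer[dept], biblio[dept]) for dept in peer}

# Gap for the institution as a whole, after summing over departments.
inst_gap = relative_gap(sum(peer.values()), sum(biblio.values()))

print(dept_gaps)
print(inst_gap)
```

Each department differs by a few per cent between the two methods, yet the institutional totals almost coincide because the positive and negative differences offset each other.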

While our analysis should be seen at most as a dry run, we think it does suggest a possible route to a light-touch, cost-effective evaluation of research. It is particularly accurate when comparing size-sensitive measures, and when aggregating individual departments into an institutional ranking.

References

Checchi, D, A Ciolfi, G De Fraja, I Mazzotta, and S Verzillo (2019), "Have You Read This? An Empirical Comparison of the British REF Peer Review and the Italian VQR Bibliometric Algorithm", CEPR Discussion Paper DP13521.

Harzing, A-W (2014), "Running the REF on a rainy Sunday afternoon: Can we exchange peer review for metrics?", paper presented at the 23rd International Conference on Science and Technology Indicators (STI 2018), Leiden.

HEFCE (2009), "Report on the pilot exercise to develop bibliometric indicators for the Research Excellence Framework", Higher Education Funding Council for England.

Hirsch, J (2010), "An index to quantify an individual’s scientific research output that takes into account the effect of multiple coauthorship", Scientometrics 85(3): 741-754.

Mryglod, O, R Kenna, Y Holovatch, and B Berche (2015), "Predicting results of the Research Excellence Framework using departmental h-index", Scientometrics 102(3): 2165-2180.

Wang, L, P Vuolanto, and R Muhonen (2014), "Bibliometrics in the research assessment exercise reports of Finnish universities and the relevant international perspectives".

Endnotes

[1] For an overview of the sources of university revenues in the UK, see https://www.universitiesuk.ac.uk/policy-and-analysis/reports/Pages/university-funding-explained.aspx. Detailed information on how public funds are allocated to UK universities can be found at www.hesa.ac.uk/stats-finance. The full set of REF rules, the identity of the reviewers, and the outcomes are all available at www.ref.ac.uk.