Despite the frequent disputes about whether antitrust policy is too lax or too stringent towards mergers and the importance of mergers to the US economy, US policymakers lack any systematic quantitative study of the effectiveness of US merger policy. Instead, policymakers rely on the qualitative assessment of scholars and experienced practitioners. For example, the recent bipartisan Antitrust Modernization Commission, a Congressional Commission of which I was a member, reached the conclusion that US antitrust policy was sound based primarily on the impressions of experienced economists and antitrust lawyers.

To understand how shocking our lack of knowledge about merger policy is, contrast it with the Federal Reserve’s efforts to control inflation. Suppose that the Federal Reserve did not have any price statistics to rely on and instead surveyed some very well-respected consumers in order to measure inflation and judge its policies. “So, Mr. Jones, you are well known to be a thoughtful shopper. Please tell me, how much do you think prices have risen? Is the Fed doing a good job or a bad job at controlling inflation?” Most readers would find it comical to conceive of the Fed using such information to measure the effectiveness of its inflation policy, yet that is the situation in which we find ourselves in judging the effectiveness of merger policy.

Despite the lack of quantitative evidence, passions often run high when discussing merger policy. “Too lax” according to the New York Times, but “too stringent” according to the Wall Street Journal. (It is possible to gather numbers on enforcement actions to see how one antitrust administration compares to another, but such comparisons tell one nothing about the right level of enforcement.) It seems as if economic analysis has little to contribute to the debate about the effectiveness of merger policy. This sorry state of affairs can and should be remedied.

One might think that retrospective merger studies would be the remedy. This is false. As I explain below, only a study using a combination of data from a retrospective merger study together with data from the government antitrust authority’s predictions of that merger will enable a thorough evaluation of the effectiveness of US merger policy. But before I explain how to do such an analysis, let me first explain what does not qualify as a systematic study of merger policy.

What is not a systematic study

There have been some studies that try to see what happens after a particular merger is consummated. Did prices rise or not? Such an analysis is informative about the decision in that particular merger, but a single study cannot generate much insight about merger policy. The reason is simple. One study cannot tell you much about a systematic bias in policy; it can tell you only whether, ex post, a particular merger raised or lowered prices. More importantly, if the purpose of the analysis is to check whether the government is failing to predict accurately whether mergers will raise prices, then one must recognise that even if the government’s ex ante predictions of price are accurate on average, in any particular case they will turn out to be too high roughly half the time and too low roughly half the time. One study will generally tell you nothing about systematic bias in government merger policy.

The limits of retrospective merger studies

So, you may respond, “I will not do one study, I will do many. If I see that for most mergers, prices subsequently rise, then I will conclude that merger policy is too lax, while if the reverse is true, I will conclude that it is too stringent.” Although I applaud doing more retrospective merger studies, the conclusions I just stated are only half-right. It is true that if I find that prices rise on average after mergers, then merger policy is too lax. But it is not true that if I find that prices fall on average after mergers, then merger policy is too stringent. The reason is a bit subtle, but it is the stuff for which Nobel Prizes are awarded, as my colleague James Heckman can attest. The reason has to do with what Heckman calls a self-selected sample. Here is how it works.

The government antitrust agency analyses a potential merger and makes a prediction, ΔP*, of what the price change will be after the merger. To keep things simple, assume the government blocks any merger in which the predicted price change is positive. The sample of observed mergers is a self-selected sample in which ΔP* is non-positive. Now, to illustrate my point, suppose the government is an accurate predictor of price changes – i.e., there is no systematic bias in the government’s prediction of price. Then a randomly (or even non-randomly) selected sample of completed mergers will generally reveal an average ΔP that is negative. Yet it is wrong to conclude from this that merger policy is too stringent. Retrospective merger studies, valuable as they are, will tend to find that merger policy is too stringent even when it is not. Self-selection creates a bias towards finding ex post price decreases from mergers and therefore such a finding provides no basis to conclude that merger policy is too stringent.
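A small simulation makes the selection effect concrete. This is only a minimal sketch under assumptions that are not in the text: actual price changes are drawn from a normal distribution, the agency's prediction equals the actual change plus mean-zero normal noise (so there is no systematic bias), and the function name and every parameter value are hypothetical.

```python
import random
import statistics


def simulate_unbiased_agency(n=100_000, mean_dp=0.02, sd_dp=0.05,
                             sd_noise=0.03, seed=0):
    """Return (mean actual dP among approved mergers, share approved).

    All distributions and parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    approved = []
    for _ in range(n):
        actual = rng.gauss(mean_dp, sd_dp)             # true price change, dP
        predicted = actual + rng.gauss(0.0, sd_noise)  # unbiased prediction, dP*
        if predicted <= 0:                             # block whenever dP* > 0
            approved.append(actual)
    return statistics.mean(approved), len(approved) / n


mean_approved, share_approved = simulate_unbiased_agency()
print(f"share of proposed mergers approved: {share_approved:.2f}")
print(f"average actual price change, approved mergers: {mean_approved:.4f}")
```

Under these assumed parameters the average actual price change among approved mergers comes out negative, even though the agency's predictions are unbiased and the average proposed merger would raise prices slightly. The negative average reflects which mergers are observed, not excessive stringency.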

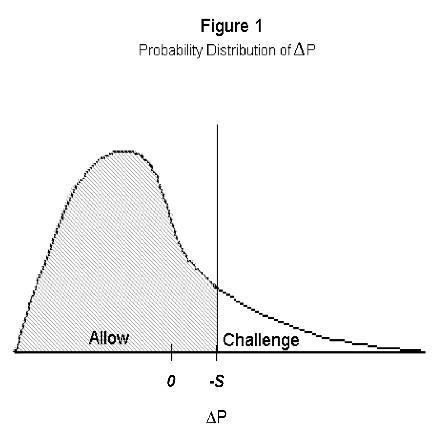

But it gets worse. Suppose that the government is making a systematic error and that its prediction, ΔP*, always mispredicts the actual ΔP by some amount, S, so that ΔP* = ΔP + S. The government will then block a merger if ΔP + S exceeds 0, that is, if ΔP > -S. If S > 0, the government always overestimates the actual price change, ΔP, and is too stringent in blocking mergers, while if S < 0, the government always underpredicts the actual price change and is too lax. In Figure 1, I have drawn a distribution of ΔP for all mergers that are proposed. Suppose S < 0, so that the government always underpredicts the actual price change. The shaded region to the left of the vertical line at ΔP = -S represents the ΔPs of the observed mergers that are allowed to proceed.

What follows from Figure 1 is that even if the government is too lax in allowing mergers to occur because it systematically underestimates the actual price change, a random sample (or even a census) of retrospective merger studies (i.e., a study of observed mergers) may be unable to detect that S < 0. That is, the average ΔP of allowed mergers could easily be negative even if S < 0 and too many mergers are being allowed!
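The same point can be checked numerically. The sketch below assumes, purely for illustration, that proposed mergers have normally distributed actual price changes centred on zero and that the agency underpredicts every price change by a constant two percentage points (S = -0.02). The function name and all numbers are hypothetical.

```python
import random
import statistics


def simulate_lax_agency(bias=-0.02, n=100_000, mean_dp=0.0,
                        sd_dp=0.05, seed=1):
    """Return (mean actual dP among approved mergers,
    share of approved mergers that actually raise prices).

    The distribution and parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    approved = []
    for _ in range(n):
        actual = rng.gauss(mean_dp, sd_dp)  # true price change, dP
        predicted = actual + bias           # dP* = dP + S, with S < 0
        if predicted <= 0:                  # approved whenever dP <= -S
            approved.append(actual)
    raised = sum(1 for dp in approved if dp > 0)
    return statistics.mean(approved), raised / len(approved)


mean_approved, share_raising = simulate_lax_agency()
print(f"average actual price change, approved mergers: {mean_approved:.4f}")
print(f"share of approved mergers that raise prices: {share_raising:.2f}")
```

With these assumed values, a noticeable share of approved mergers raise prices, yet the average ΔP across approved mergers is still negative. A retrospective study that looked only at that average would not reveal that S < 0.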

The upshot of this reasoning is that although we don’t have very many retrospective merger studies and I would like to see more, they are quite limited in what they can tell us. True, if they on average show price increases, we can conclude merger policy is too lax. But the reverse is not true because merger policy can be too lax, even if one finds that, on average, mergers lower price! This means that, on average, these retrospective studies are biased in the sense of being unable to detect when merger policy is too lax. This sounds bad, but there is a way to do a proper analysis of the effectiveness of merger policy. It involves using more data.

What should be done?

There are several ways to remedy the above shortcomings of retrospective studies. First, one can, after specifying certain assumptions about error terms, construct a model that properly takes account of the self-selected sample of observed mergers. This approach is feasible with the same data that one needs for the retrospective study itself. It strikes me as an intellectually interesting and challenging exercise, perhaps even worth a PhD thesis, but its value is limited compared to the approach that I describe next.

The real goal is to measure S, the systematic bias in the price predictions of the government antitrust agency. The problem is that the analyst has observations only on the actual price change, ΔP, not on the predicted price change, ΔP*. But the government agencies know (explicitly or implicitly) their best prediction of the price change at the time of the merger decision. If we had that information, we could calculate S for each completed merger as the difference between the predicted and the actual price change, ΔP* - ΔP, and then average it across mergers. This would give us a much more precise estimate of S than we could otherwise obtain by doing the fancy econometrics just outlined based on only ΔP (see footnote 1).
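If the agency's predictions were available, the averaging step would be simple. The sketch below assumes the simple model used above, in which ΔP* = ΔP + S with a constant S. The function name and the four records are hypothetical, invented only to show the arithmetic.

```python
import statistics


def estimate_bias(completed_mergers):
    """Average prediction error, dP* - dP, over completed mergers.

    `completed_mergers` is an iterable of
    (predicted_change, actual_change) pairs.
    """
    errors = [predicted - actual for predicted, actual in completed_mergers]
    return statistics.mean(errors)


# Hypothetical records, invented purely for illustration: (dP*, dP).
sample = [(-0.03, -0.01), (-0.01, 0.02), (-0.02, -0.01), (0.00, 0.02)]
print(f"estimated S: {estimate_bias(sample):+.3f}")
```

A negative estimate would indicate that the agency tends to underpredict price increases. If predictions also contained random noise, the simple average over approved mergers would itself be affected by selection on ΔP*, so a fuller econometric specification would be needed in that case.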

A complete analysis

The advantage of the approach just outlined is that it can be expanded into a direct test of the methodologies used by an antitrust agency to evaluate mergers. For example, one can see whether the bias, S, differs across industries. Does the agency do a poor job in high technology industries compared to other industries? Moreover, the merger models used by the government agency could be tested. For example, in some mergers, complicated merger simulation models are used, while in others, simple price-concentration regressions might be used to predict the price effect of the merger. Does one type of approach work better than the other, and, if so, under what circumstances? Merger discussions often hinge on the assessment of the ease of entry or the ability of larger customers to vertically integrate in the face of large price increases. How well does the antitrust agency predict entry and vertical integration decisions?
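Comparing bias across industries or across prediction methods amounts to averaging the prediction error within groups. The following is a minimal sketch. The record layout, field names, and all values are hypothetical and are not drawn from any agency's data.

```python
from collections import defaultdict
import statistics


def bias_by_group(records, key):
    """Mean prediction error (dP* - dP) for each value of `key`."""
    errors = defaultdict(list)
    for rec in records:
        errors[rec[key]].append(rec["predicted"] - rec["actual"])
    return {group: statistics.mean(vals) for group, vals in errors.items()}


# Hypothetical records, invented purely for illustration.
records = [
    {"industry": "high tech", "method": "simulation",
     "predicted": -0.02, "actual": 0.02},
    {"industry": "high tech", "method": "regression",
     "predicted": -0.01, "actual": 0.01},
    {"industry": "other", "method": "simulation",
     "predicted": -0.03, "actual": -0.03},
    {"industry": "other", "method": "regression",
     "predicted": -0.01, "actual": 0.01},
]

for key in ("industry", "method"):
    for group, bias in bias_by_group(records, key).items():
        print(f"{key}={group}: estimated S = {bias:+.3f}")
```

The same grouping could be applied to any recorded feature of the agency's analysis, such as whether entry was predicted, provided the agency keeps track of its predictions at the time of the decision.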

A systematic study of merger policy should not only be able to answer whether there are systematic biases in an antitrust agency’s price predictions but also assess more generally which of the agency’s methods of analysis work best. A government antitrust agency should keep track of its predictions and methods of analysis and then test their accuracy on consummated mergers. Only when that happens will analysis replace opinion as the basis for judging the effectiveness of merger policy.

Footnotes

1 The details of how to specify an econometric model to estimate S with and without observations on ΔP* are developed in my “Why We Need to Measure the Effect of Merger Policy and How to Do It”, National Bureau of Economic Research paper 14719, forthcoming in Competition Policy International.